Vapi AI Review in 2026: Pricing, Pros, & Cons [Tested & Ranked]

I spent two weeks testing Vapi AI alongside other voice platforms to understand how it actually performs in production. Vapi is a developer-first voice agent platform that gives you detailed control over models, telephony, and call logic. This review explains the key features, costs, and when alternatives like Lindy are the better choice.

Vapi vs Alternatives: Quick Comparison Table

What Is Vapi AI?

Vapi AI is a developer-focused platform that provides tools and APIs to build, test, and deploy sophisticated voice AI assistants.

In plain language, Vapi is a voice AI tool that sits between your phone system and your AI models. You plug in services like speech-to-text, a large language model, and text-to-speech, and Vapi handles the call, turns speech into text, sends it to the model, and speaks the reply back to the caller.

The focus is on programmable voice rather than pure no-code. You get APIs, SDKs, and a dashboard so you can:

- Create custom voice agents for support, sales, or operations.

- Connect those agents to your existing tools and internal APIs.

- Control how the agent listens, thinks, and responds on each call.

Who Vapi AI is for:

- Good fit: Teams with developers who want fine-grained control over a voice agent platform.

- Harder fit: Non-technical teams who want to stay in a visual builder and avoid writing or maintaining backend code.

How Vapi AI Works (Behind the Scenes)

Vapi runs a real-time loop that follows three steps: listen, think, and speak. This loop connects the caller, your AI models, and your telephony provider. The entire platform is built around this pipeline, which is why it appeals to teams that want control over every stage of a live call.

1. Listen (Speech-to-Text)

When a caller speaks, Vapi streams the audio to the speech-to-text engine you choose. Popular options include Deepgram, AssemblyAI, and OpenAI, among others. You receive fast transcripts while the caller is still talking, which helps the agent respond without long pauses.

2. Think (LLM reasoning)

The transcript is sent to your selected language model. This is the part that decides what the agent should do. The model can interpret intent, check rules, call your APIs, and produce the next message the agent will say.

3. Speak (Text-to-Speech)

The model’s message is converted to natural speech using your preferred voice provider, such as ElevenLabs, Azure, or Play.ht. Vapi streams the audio back to the caller while continuing to listen for any new input.

Most setups fall in the range of about 500 to 800 milliseconds between a caller’s message and the agent’s reply. The exact number depends on your choices for STT, LLM, and TTS.
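To see where that range comes from, it helps to add up the stages of a single turn. The sketch below is a rough latency budget, not a measurement: the stage names and every millisecond figure are illustrative assumptions, and real numbers depend on the providers you pick.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageLatency:
    """Hypothetical per-stage delay for one conversational turn, in milliseconds."""
    stt_ms: int      # time until the final transcript is available
    llm_ms: int      # time until the model's first token
    tts_ms: int      # time until the first chunk of synthesized audio
    network_ms: int  # telephony and transport overhead

    def total_ms(self) -> int:
        """Sum the stages to get the caller-perceived response delay."""
        return self.stt_ms + self.llm_ms + self.tts_ms + self.network_ms


# Illustrative numbers only; measure your own stack before relying on them.
fast_stack = StageLatency(stt_ms=150, llm_ms=250, tts_ms=100, network_ms=50)
premium_stack = StageLatency(stt_ms=200, llm_ms=400, tts_ms=150, network_ms=50)

print(fast_stack.total_ms())
print(premium_stack.total_ms())
```

Swapping a single stage, for example a slower but higher-quality LLM, is usually what moves a setup from one end of the range to the other.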

Orchestration Features That Improve Call Quality

Vapi includes several coordination features that handle common issues in live calls and create a more natural experience.

- Endpointing: Detects when the caller has finished speaking so the agent responds at the right time.

- Interrupt detection: Allows the caller to speak while the agent is talking without breaking the flow.

- Backchanneling: Inserts short acknowledgments such as “okay” or “sure” when the system needs processing time.

- Emotion and intent cues: Identifies tone signals such as urgency or frustration and adjusts responses accordingly.

- Noise filtering: Reduces background noise so the transcription engine receives cleaner audio.

These components work together to keep the conversation smooth, responsive, and stable across different providers and connection qualities.
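Endpointing is the easiest of these to picture in code. The sketch below is a deliberately simplified, silence-based version: it assumes audio arrives as per-frame energy values and treats a long enough quiet run after speech as the end of the caller's turn. The function name, thresholds, and frame size are all hypothetical, and this is not a description of how Vapi implements it internally.

```python
from typing import Iterable, Optional


def find_endpoint(frame_energies: Iterable[float],
                  frame_ms: int = 20,
                  energy_threshold: float = 0.1,
                  silence_ms: int = 400) -> Optional[int]:
    """Return the frame index where the caller is considered finished, or None.

    A turn ends once speech has been heard and is followed by at least
    `silence_ms` of consecutive frames below `energy_threshold`.
    """
    frames_needed = silence_ms // frame_ms
    quiet_run = 0
    heard_speech = False
    for index, energy in enumerate(frame_energies):
        if energy >= energy_threshold:
            # Speech resets the silence counter.
            heard_speech = True
            quiet_run = 0
        elif heard_speech:
            quiet_run += 1
            if quiet_run >= frames_needed:
                return index
    return None


# 5 quiet frames, 10 frames of speech, then 25 quiet frames (20 ms each).
frames = [0.0] * 5 + [0.5] * 10 + [0.0] * 25
print(find_endpoint(frames))
```

A shorter `silence_ms` makes the agent feel snappier but risks cutting in during natural pauses, which is the trade-off any endpointing setting has to balance.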

Key Vapi AI Features (What You Can Actually Build)

With Vapi, you can build production-ready voice agents for phone calls and web apps, with detailed control over how they listen, respond, and act during each conversation.

Flow Studio and Blocks

Flow Studio lets you sketch out simple conversational flows inside the dashboard. You can create basic messages, add small branching steps, and launch quick prototypes without writing code. It works well when you want to show a rough concept to a teammate or test a narrow workflow.

Once the logic becomes more complex, you move into the API, since Flow Studio is not designed for variable handling, multi-step validation, or deeper conditional paths. In practice, developers treat it as a starting point rather than the main build environment.

Developer Features and API Capabilities

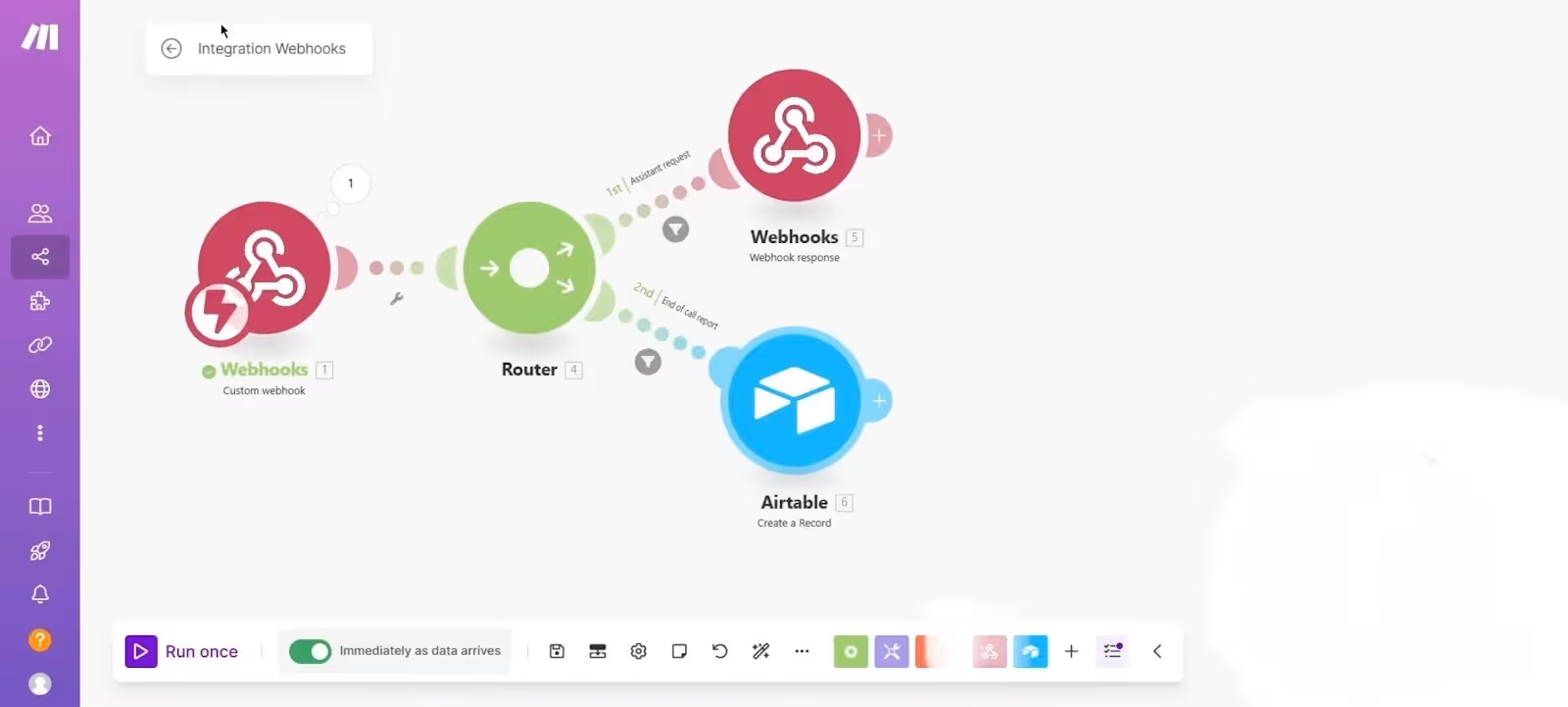

This is where Vapi AI is strongest. You can plug in your preferred STT, LLM, and TTS providers and control every part of the request and response cycle. Vapi exposes clear webhooks for tool calls, structured outputs, and fallback behaviour.

It also supports custom LLMs through a simple endpoint that can point to a local server or a production system. The SDKs cover web, iOS, and JavaScript, which gives teams a direct way to embed voice into existing products.

Vapi does not handle retries, rate limits, or long-running operations for you. Those decisions remain with your backend so you can design behaviour that fits your own systems.
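Since retries stay on your side, a small wrapper around each tool call is a common starting point. The following is a minimal sketch of retry with exponential backoff in plain Python. The helper name `call_with_retries` and its defaults are my own assumptions; it does not use or represent any Vapi SDK function.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(operation: Callable[[], T],
                      max_attempts: int = 3,
                      base_delay_s: float = 0.2,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, retrying on any exception with exponential backoff.

    The delay doubles after each failed attempt: base, 2x base, 4x base, ...
    The last failure is re-raised so the caller can decide on a fallback.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(base_delay_s * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")
```

In a live call, total retry time counts against the response delay the caller hears, so it is worth keeping `max_attempts` and `base_delay_s` small and returning a spoken fallback when the final attempt fails.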

Assistants and Squads

Vapi uses two main primitives to structure agents. Assistants are the default choice for most workflows. They run on a single system prompt with added tools and instructions. They work well for customer support, routing, reservations, or product questions.

Squads are useful when your workflow needs multiple specialists. You can switch between assistants during the same call and keep context intact. This setup makes sense for use cases like medical intake, order management, or multi-step service flows where a single model prompt is not enough.
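The idea of switching assistants while keeping context can be sketched in a few lines. This is a conceptual model only: the `Assistant` and `CallContext` classes and the `hand_off` method are hypothetical names I made up for illustration, not Vapi's actual data structures or API.

```python
from dataclasses import dataclass, field


@dataclass
class Assistant:
    """Hypothetical specialist: a name plus the system prompt it runs on."""
    name: str
    system_prompt: str


@dataclass
class CallContext:
    """Hypothetical per-call state shared by every assistant in the squad."""
    active: Assistant
    history: list[str] = field(default_factory=list)

    def hand_off(self, target: Assistant) -> None:
        """Switch the active assistant without discarding the conversation so far."""
        self.history.append(f"[handoff] {self.active.name} -> {target.name}")
        self.active = target


intake = Assistant("intake", "Collect the caller's name and reason for calling.")
billing = Assistant("billing", "Answer questions about invoices and payments.")

call = CallContext(active=intake)
call.history.append("caller: I have a question about my last invoice.")
call.hand_off(billing)

print(call.active.name)
print(len(call.history))
```

The point of the shared `history` is that the second specialist does not need to ask the caller to repeat anything already said to the first.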

Voice Quality, Voice Library, and Languages

Vapi does not generate voices on its own. Instead, it connects to providers such as ElevenLabs, Azure, or Play.ht. Your voice quality depends entirely on the provider you choose. The same applies to language support and accent variety.

Vapi simply coordinates the audio pipeline so that the TTS layer speaks clearly and the STT engine receives clean input. Since everything is modular, teams can swap voices, models, and languages without redesigning the entire agent.

Knowledge Base and File Imports

Vapi allows you to upload PDFs or text documents so the agent has reference material during calls. It handles the retrieval layer internally, which means you do not configure embeddings or chunking by hand.

This setup is useful for menus, product catalogs, policy documents, and internal knowledge. It provides grounding without requiring a full custom RAG pipeline.

Call Analysis and Data Extraction

Vapi generates summaries and structured call data that can be pushed into CRMs or ticketing systems. The analysis covers basic sentiment, key events, and outcome scoring.

The scoring criteria are fixed, which keeps reporting consistent but limits customization. For many teams, this is enough for QA workflows, conversation reviews, and basic automation triggers.

Ease of Use: Is Vapi AI Beginner-Friendly?

Vapi AI is simple enough to try, but it becomes technical the moment you want to build anything meaningful. After working with it, my impression is that it is shaped for developers first and everyone else second. You can feel that design choice in almost every part of the interface.

Experience for Developers

If you write or maintain backend code, Vapi feels comfortable. The API is clean, the request structure is easy to understand, and the model configuration flows the way you would expect. I never felt lost while wiring up a custom LLM or testing a small server locally. Everything behaves in a predictable, engineering-friendly way.

The responsibility sits with the developer, not with Vapi. Error handling, retries, tool behaviour, and integration steps stay on your side. Some teams prefer this level of control, and I include myself in that group.

You can shape the experience exactly the way your product needs, but you also need someone who understands how to manage it.

Experience for Non-Technical Users

Non-technical users can get an initial agent running from the dashboard. You can create a simple flow and add basic responses without touching code. The problem shows up when you push even slightly beyond the basics.

Vapi does not guide you through multi-step logic, data handling, or conditions that depend on external systems. Those tasks always require a developer.

The dashboard is clean, but it assumes you understand what happens between the STT, LLM, and TTS layers. If you do not, the system feels more like a collection of switches than a guided builder.

Templates, Testing, and Missing UX Pieces

Vapi has only a few templates, and they rarely cover real-world depth. There is no chat-style testing mode inside the dashboard, so you cannot simulate a conversation before placing a real call. There is also no native web widget for voice, which means you cannot embed the agent directly into a site without custom coding.

Phone number availability is largely limited to the United States and Canada, so testing outside those regions requires workarounds.

If I look at the overall experience, Vapi is usable for beginners but not designed for them. It works best when a developer takes ownership of the setup, because it opens up only when you treat it as a programmable voice system rather than a no-code tool.


Vapi AI Pricing (Explained Simply)

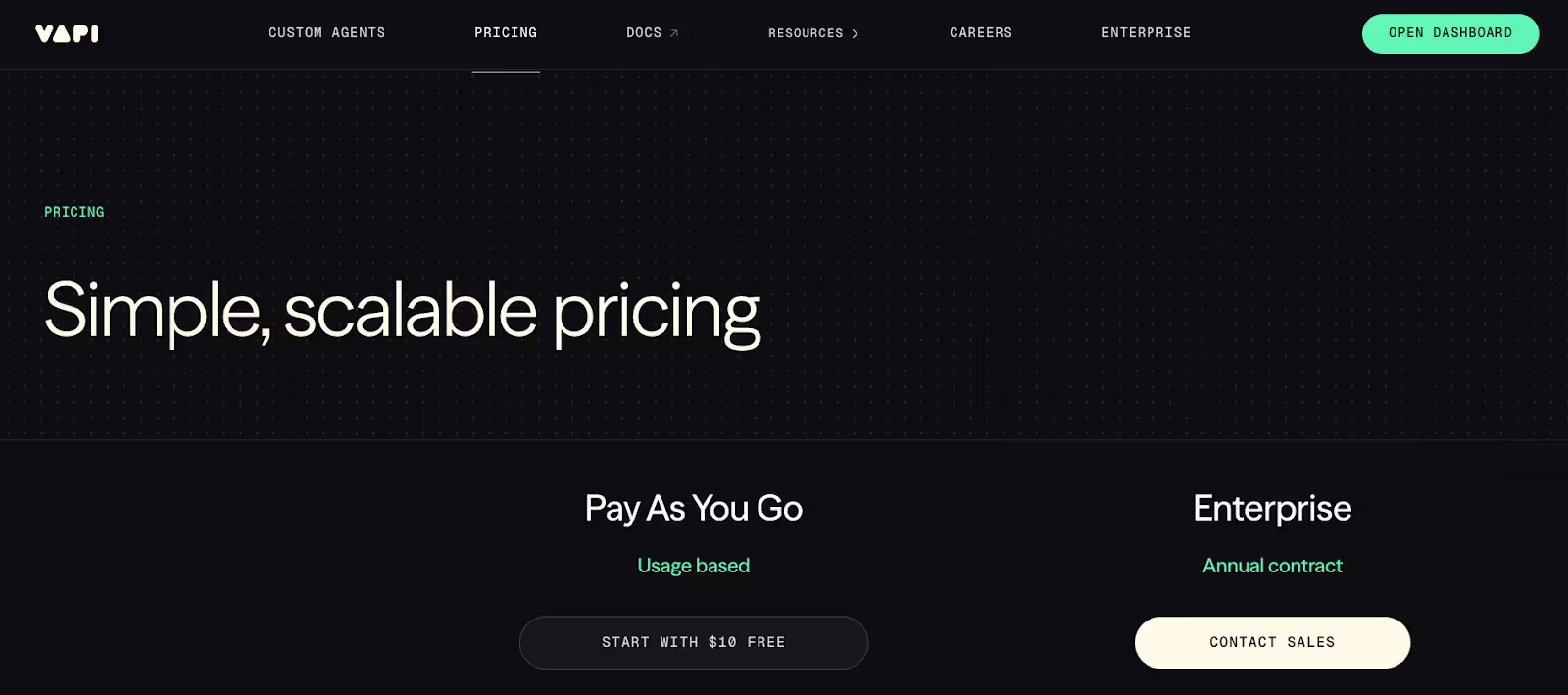

Vapi AI pricing is based on usage, and it charges per minute once you begin making or receiving calls. The advertised starting point is $0.05 per minute, but this covers only the orchestration layer.

Is Vapi AI Free?

Vapi AI is not fully free, because usage becomes billable after your initial trial credits run out. New users receive $10 in credits to test the system, but there is no ongoing free tier.

The 4 Layers of Vapi Pricing

Vapi pricing has multiple components, and the orchestration fee is only one part of the total cost.

- Platform and orchestration: This is the Vapi-side cost of coordinating the voice agent. It begins at about $0.05 per minute.

- Speech to Text (STT): You pay the provider you choose. Services such as Deepgram or AssemblyAI add their own per-minute cost.

- Large Language Model (LLM): Charges depend on the model you select and the number of tokens processed in each call. Providers such as OpenAI and Anthropic bill separately.

- Text to Speech (TTS) and telephony: Voice synthesis providers and telephony carriers contribute their own fees. This includes phone number rental and call network charges.

Realistic Effective Cost Per Minute

The real cost of running a Vapi agent is higher than the base orchestration fee. In most practical setups, the final cost per minute falls between $0.07 and $0.25 or more, depending on the models and voice engines selected.

A simple configuration that uses low-cost STT and TTS often sits near the lower end. A higher-quality configuration that relies on premium LLMs and advanced voices moves toward the upper range.

In my experience, teams that do not monitor their model choices tend to drift toward the higher numbers after a few weeks of testing.
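A quick way to keep that drift visible is to add up the layers yourself. The sketch below is a back-of-the-envelope estimator: the $0.05 orchestration fee comes from the pricing above, while every other rate is an illustrative assumption that you should replace with your providers' actual prices.

```python
def effective_cost_per_minute(orchestration: float,
                              stt: float,
                              llm: float,
                              tts: float,
                              telephony: float) -> float:
    """Sum the per-minute cost of every billing layer, in dollars."""
    return round(orchestration + stt + llm + tts + telephony, 4)


def monthly_cost(per_minute: float, minutes: int) -> float:
    """Project total spend for a given number of call minutes."""
    return round(per_minute * minutes, 2)


# Only the orchestration fee is taken from the article; the rest are placeholders.
per_minute = effective_cost_per_minute(
    orchestration=0.05,
    stt=0.01,        # assumed
    llm=0.02,        # assumed
    tts=0.03,        # assumed
    telephony=0.01,  # assumed
)

print(per_minute)
print(monthly_cost(per_minute, minutes=10_000))
```

Re-running the estimate whenever you change a model or voice makes it obvious which layer is responsible when the bill moves.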

Ideal Use Cases of Vapi AI

- Complex programmable voice workflows: Vapi works well when the voice agent needs to make decisions based on real data, call external APIs, or move through multi-step processes. These scenarios benefit from the level of control Vapi gives developers, since nothing is locked behind rigid templates.

- Developer-first voice products: If voice is a core part of your product and not just a small add-on, Vapi is a good match. Vapi gives developers the ability to pick their own models, configure behaviour at every stage, and embed voice directly into an application using the SDKs. I find this approach useful when teams want the agent to feel like part of their infrastructure.

- High-volume inbound and outbound calling: Vapi can scale call operations efficiently once your routing, models, and providers are tuned. It makes sense for support lines, qualification flows, and automated follow-ups where you need consistent behaviour at large volumes.

- Businesses that need custom logic instead of templates: Some teams have workflows that cannot be reduced to a simple visual builder. Vapi fits those cases because you can design the logic yourself, define tools, and set your own routing rules. No-code builders tend to limit this kind of customization, which is why Vapi becomes more valuable when the process is complex.

Limitations and Trade-Offs of Vapi AI

- High complexity for non-technical teams: Vapi allows anyone to create a basic call flow, but the simplicity ends quickly. As soon as a workflow needs external data, conditional logic, or multi-step reasoning, backend support is required and non-technical teams cannot maintain the system alone.

- Layered pricing that expands with model choices: The base per-minute rate is only one part of the full cost. Each call also incurs separate charges from the STT engine, language model, TTS provider, and telephony layer, so higher-quality voices or advanced models can push total spend up faster than expected.

- Voice-only focus with no support for other channels: Vapi concentrates entirely on voice experiences. There is no native path to SMS or chat, and calls cannot move into a text channel when needed, so teams that want unified communication must add additional tools.

- Limited depth in the visual builder: Flow Studio is helpful for outlining ideas, but it does not support the complexity required for most production workflows. There are no built-in controls for advanced branching, cross-step memory, or dynamic conditions, so the real logic eventually has to live in code.

- Phone number availability is restricted to a few regions: Phone numbers are primarily available in the United States and Canada. Teams operating in Europe, Asia, or other regions need external telephony arrangements, which adds configuration overhead and can slow down testing.

- Enterprise features available only on higher plans: Access controls, detailed logging, and compliance features are not fully included by default. Organizations that rely on governed access or formal audit trails need to upgrade plans to meet those requirements.

How Vapi Compares to Lindy

The difference between Vapi and Lindy becomes clear once each platform is viewed in terms of who it is built for and what problems it is trying to solve. Both handle voice agents, but they approach the idea of an AI agent from completely different angles.

Vapi focuses on programmable voice, while Lindy focuses on full-stack automation

Vapi is rooted in the idea that teams should control every part of the voice pipeline. It treats voice as an engineering problem and gives developers the ability to choose their own STT, LLM, TTS, and telephony stack. This makes sense when the primary goal is to build a highly customized phone agent or integrate voice into an existing product.

Lindy takes a broader approach. It positions AI agents as part of an organization’s overall workflow rather than as a single communication channel. Lindy agents can run calls, handle email, resolve support tickets, process documents, manage calendars, and operate inside a virtual machine.

For many teams, this creates one place to run both voice interactions and the work that follows those interactions.

Vapi relies on engineering ownership, while Lindy is built for faster adoption

Vapi provides a developer-first environment that rewards teams comfortable with APIs and custom logic. This allows for fine control but requires more technical involvement from the start. Complex logic, data routing, and custom retrieval all depend on backend systems that the team maintains.

Lindy is designed to be adopted quickly by non-technical users and scaled across multiple departments.

The no-code agent builder, template library, and natural-language app creation flow make it possible for teams to launch agents for support, sales, marketing, or internal operations. This means they do not have to rely on engineering for every small change or update.

Vapi handles voice only, while Lindy supports multiple channels

Vapi focuses entirely on voice calls. It does not extend into chat, SMS, email, or internal workflows. This narrow focus allows it to specialize in programmable telephony experiences but limits its usefulness when the required workflow spans multiple channels.

Lindy agents operate across phone, chat, email, and internal automation. Voice becomes one part of a larger system rather than the entire system. A support agent can answer a call, create or resolve a ticket, send an email, update a CRM record, and write a follow-up message in the same workflow.

Vapi offers a modular configuration, while Lindy offers a unified agent environment

Vapi provides flexible primitives such as Assistants and Squads, which help structure conversational logic for single or multi-agent voice interactions. Developers control each model, provider, and decision step. This modularity is useful for teams that want a programmable framework rather than a guided environment.

Lindy unifies everything into a single AI-native automation platform. Agents live inside one workspace with access controls, memory, integrations, and evaluations. Teams can train, deploy, and update agents from a central interface, and the platform handles the orchestration behind the scenes.

Vapi scales calls, while Lindy scales entire workflows

Vapi is well-suited for high-volume inbound and outbound calling. Once tuned, it can support large-scale voice operations because it relies on external providers and a model-agnostic design.

Lindy is built to scale entire workflows. In many deployments, the agent handles the call, processes the request, extracts information from documents, updates systems, and follows up with customers. This end-to-end structure reduces the number of tools needed to automate a process.

Vapi pricing is usage-based, while Lindy pricing is task-based

Vapi charges based on minutes plus the cost of your chosen models and providers. This works well for teams that want deep control, but it requires monitoring across several billing layers.

Lindy uses a task-based pricing model with included credits for actions. This model is easier to predict for larger organizations because it abstracts LLM, STT, and TTS costs into the task system.


How to Get Started with Vapi AI (Step-by-Step)

Getting started with Vapi AI follows a simple sequence of steps that moves from basic setup to live calls. The outline below gives enough structure to get moving without overwhelming detail.

Step 1: Create an account and unlock the trial credits. Sign up, then use the credits to make a few test calls and explore the dashboard so the main controls feel familiar.

Step 2: Create an assistant and define its role. Create an assistant in the dashboard and write a clear system prompt. This prompt sets the agent’s tone, responsibilities, and boundaries during calls.

Step 3: Choose the STT, LLM, and TTS providers. Select providers for speech-to-text, the language model, and text-to-speech. These choices affect accuracy, response time, and cost, so it helps to try a couple of combinations before committing.

Step 4: Add tools and connect external systems. Attach tools so the assistant can do real work, such as checking data, updating records, or sending follow-ups. Connecting these systems is what turns the agent from a demo into something useful.

Step 5: Attach phone numbers and run live calls. Add or import a phone number to start real calls. Vapi mainly offers US and Canada numbers, so other regions may require external telephony. A few short calls quickly reveal timing and flow issues.

Step 6: Review behaviour and adjust for performance and cost. Look at how the agent behaves over several calls, then refine prompts and model choices. At the same time, check usage and pricing so the final setup balances quality and per-minute cost.

Try Lindy: An AI assistant that handles support, outreach, and automation

Lindy uses conversational AI that handles not just chat, but also lead gen, meeting notes, and customer support. It handles requests instantly and adapts to user intent with accurate replies.

Here's how Lindy goes the extra mile:

- Fast replies in your support inbox: Lindy answers customer queries in seconds, reducing wait times and missed messages.

- 24/7 agent availability for async teams: You can set Lindy agents to run 24/7, which suits async workflows and round-the-clock support.

- Support in 30+ languages: Lindy’s phone agents support over 30 languages, letting your team handle calls in new regions.

- Add Lindy to your site: Add Lindy to your site with a simple code snippet, instantly helping visitors get answers without leaving your site.

- Integrates with your tools: Lindy integrates with tools like Stripe and Intercom, helping you connect your workflows without extra setup.

- Handles high-volume requests without slowdown: Lindy handles any volume of requests and even teams up with other instances to tackle the most demanding scenarios.

- Lindy does more than chat: There’s a huge variety of Lindy automations, from content creation to coding. Check out the full Lindy templates list.

Try Lindy free and automate your first 40 tasks today.

FAQs

1. Is Vapi AI a good voice AI platform?

Vapi AI is a good choice as a programmable voice AI platform when teams want control over models and telephony. As a voice agent platform, it focuses on phone calls only. Lindy is often a better fit when a voice agent needs to work across voice, chat, email, and internal workflows.

2. What are the best alternatives to Vapi for voice AI?

Lindy is the best alternative to Vapi for voice AI, because it handles calls, chat, email, and automation in one platform. Other Vapi AI alternatives include Retell AI and Bland AI, but they do not match Lindy’s multi-channel coverage or workflow depth.

3. What are the leading options beyond Vapi for custom voice flows?

Lindy is the leading option beyond Vapi for custom voice flows, because it combines phone, chat, email, and document actions inside one agent. Retell AI and Bland AI can help, but Lindy is the most complete agent platform when teams need both control and cross-channel automation.

4. What are the best alternatives to Vapi for outbound voice AI?

Lindy is one of the best alternatives to Vapi for outbound voice AI when teams want calls tied to CRM updates, emails, and internal workflows. Bland AI is another outbound-focused Vapi alternative, but it does not handle the end-to-end automation or multi-channel orchestration that Lindy delivers.

5. Who provides better voice AI tools than Vapi for non-developers?

Lindy provides better voice AI tools than Vapi for non-developers because it offers a guided no-code builder, templates, and integrated automation. For non-technical teams that need a voice agent platform, Lindy is usually the safer choice, since it avoids the heavy engineering work that Vapi requires.

